AI in Cybersecurity: Protection or Risk in 2026

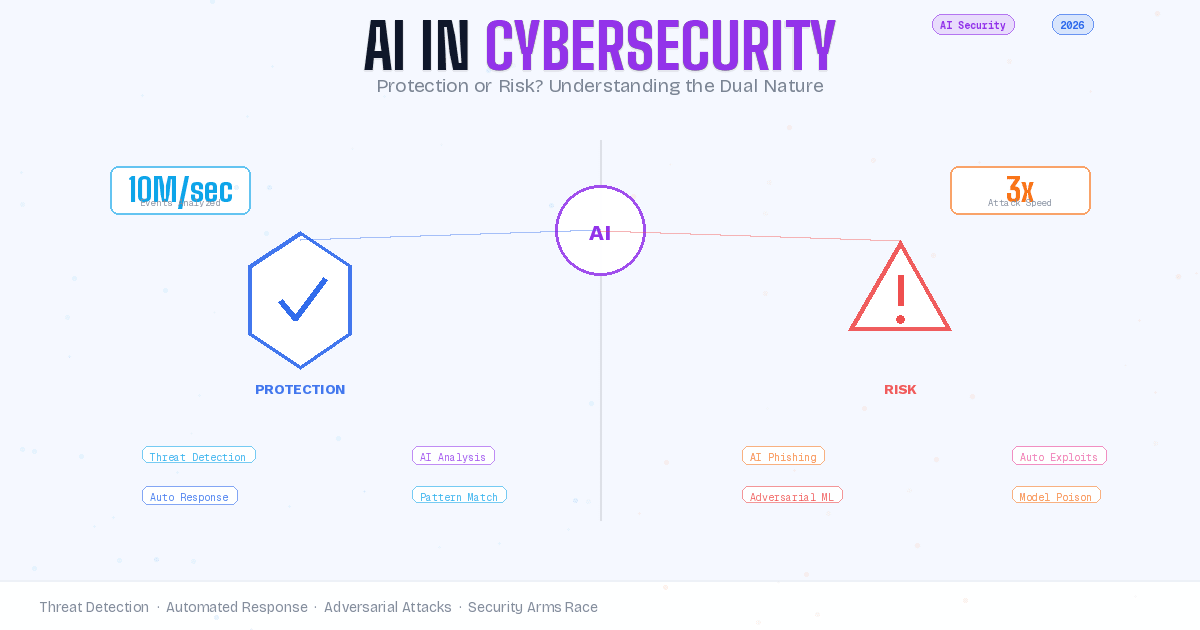

Quick Answer: AI is simultaneously the most powerful defensive tool and the most concerning offensive weapon in cybersecurity today. Organizations use AI to detect threats at speeds humans cannot match, while attackers use the same technology to create sophisticated phishing campaigns, bypass security systems, and automate large-scale attacks. The question is not whether AI belongs in cybersecurity, but how to harness its protective capabilities while defending against its weaponization.

The relationship between artificial intelligence and cybersecurity has reached a critical inflection point. Security teams deploy AI-powered tools that analyze millions of events per second, identifying patterns and anomalies that would be impossible for human analysts to catch. These same capabilities, when turned toward malicious purposes, enable threat actors to operate at scales and sophistication levels previously unattainable.

This dual-use nature of AI in cybersecurity creates a fundamental paradox. The technology that makes modern defense possible also makes modern attacks more dangerous. Understanding this paradox is essential for security professionals, business leaders, and anyone responsible for protecting digital assets. This article examines both sides of the equation with equal rigor, because effective security strategy requires acknowledging both the promise and the peril.

How AI Strengthens Cybersecurity Defense

AI transforms cybersecurity defense through three primary mechanisms: speed, scale, and pattern recognition. A human analyst can realistically review hundreds of security events per day, whereas AI systems can process millions of events per second and surface threats in real time that would otherwise go undetected until significant damage had occurred.

Machine learning models excel at detecting anomalous behavior within network traffic, user activity, and system operations. These models establish baseline patterns of normal activity and flag deviations that may indicate compromise. A sudden spike in data transfers from a user account, login attempts from unusual geographic locations, or system processes behaving differently than their established patterns all trigger alerts that warrant investigation.
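As a minimal sketch of the baseline-and-deviation idea, the snippet below flags a daily data-transfer volume that sits far outside a user's recent history. The function name `is_anomalous`, the z-score approach, the threshold of 3.0, and the sample figures are all illustrative assumptions; production systems typically use richer models than a single statistic.

```python
from statistics import mean, stdev

def is_anomalous(history, observed, z_threshold=3.0):
    """Flag `observed` if it is more than `z_threshold` standard
    deviations away from the mean of `history` (the baseline)."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly constant history: any change is a deviation.
        return observed != baseline
    z_score = abs(observed - baseline) / spread
    return z_score > z_threshold

# Hypothetical daily outbound transfer volumes (MB) for one account.
daily_mb = [120, 135, 110, 128, 140, 125, 131]

print(is_anomalous(daily_mb, 133))  # False: within normal variation
print(is_anomalous(daily_mb, 900))  # True: sudden spike
```

A flag here is only a prompt for investigation, matching the point above that deviations "may indicate" compromise rather than prove it.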

The pattern recognition capability extends beyond simple threshold violations. Modern AI security systems identify complex attack chains where individual actions appear benign but collectively indicate malicious intent. An attacker might escalate privileges slowly over weeks, exfiltrate data in small amounts to avoid detection thresholds, or use legitimate administrative tools in suspicious combinations. AI systems trained on historical attack data recognize these patterns even when individual steps fall within normal operating parameters.
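The "low and slow" exfiltration case can be illustrated with a rolling total: no single day crosses the per-day limit, yet the sum over a window does. This is a deliberately simplified stand-in for the learned models described above; `slow_exfiltration_days`, the limits, and the sample data are hypothetical.

```python
from collections import deque

def slow_exfiltration_days(daily_mb, per_day_limit, window_days, window_limit):
    """Return the indices of days on which the rolling total over the last
    `window_days` exceeds `window_limit`, even though that day's own volume
    stayed at or below `per_day_limit`."""
    flagged = []
    window = deque(maxlen=window_days)  # oldest day drops out automatically
    for day, mb in enumerate(daily_mb):
        window.append(mb)
        if mb <= per_day_limit and sum(window) > window_limit:
            flagged.append(day)
    return flagged

# Every day is well under the 100 MB per-day limit.
transfers = [40, 45, 50, 48, 47, 49, 46]

print(slow_exfiltration_days(transfers, per_day_limit=100,
                             window_days=5, window_limit=220))
# [4, 5, 6]
```

A simple per-event threshold would pass every one of these days; only the aggregate view reveals the pattern.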

Natural language processing has advanced phishing detection significantly. AI models analyze email content, sender reputation, link destinations, and behavioral context to identify sophisticated phishing attempts that bypass traditional signature-based filters. Because these systems evaluate the underlying structure and intent of a message rather than relying on known malicious indicators, they can catch zero-day phishing campaigns that no filter has seen before.

How AI Enables More Dangerous Attacks

The same capabilities that make AI valuable for defense make it dangerous in the hands of attackers. Large language models generate convincing phishing emails at scale, automatically adapting messages to target specific individuals based on publicly available information about their roles, interests, and communication patterns. Because each message is tailored to its recipient, these AI-generated campaigns can achieve higher success rates than traditional mass phishing attempts.

Attackers use machine learning to identify vulnerabilities in target systems more efficiently than manual reconnaissance. AI-powered scanning tools analyze application behavior, network configurations, and security controls to identify the most promising attack vectors. This automated vulnerability discovery accelerates the timeline from system deployment to exploitation, reducing the window during which organizations can patch known vulnerabilities before they are actively exploited.

Adversarial machine learning presents a particularly concerning threat category. Attackers craft inputs specifically designed to fool AI security systems, causing them to misclassify malicious activity as benign or benign activity as malicious. These adversarial attacks exploit the mathematical nature of how AI models make decisions, finding edge cases where small, carefully crafted modifications to input data produce dramatically different classification results.
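To make the "small, carefully crafted modification" concrete, here is a toy illustration against a three-feature linear classifier. The weights, bias, feature values, and the step size `epsilon` are invented for the example, and real detection models are far more complex. The perturbation nudges each feature a small amount in the direction that lowers the score, which is the same idea behind sign-based gradient attacks.

```python
def score(weights, bias, features):
    """Linear decision score: positive means 'malicious'."""
    return sum(w * x for w, x in zip(weights, features)) + bias

def classify(weights, bias, features):
    return "malicious" if score(weights, bias, features) > 0 else "benign"

def perturb(weights, features, epsilon):
    """Shift every feature by `epsilon` against the sign of its weight,
    which lowers the linear score by epsilon * sum(|w|)."""
    def sign(w):
        return 1 if w > 0 else -1 if w < 0 else 0
    return [x - epsilon * sign(w) for w, x in zip(weights, features)]

# Hypothetical model and sample.
weights = [0.8, -0.5, 0.3]
bias = -0.2
sample = [0.5, 0.2, 0.4]

evasive = perturb(weights, sample, epsilon=0.2)

print(classify(weights, bias, sample))   # malicious
print(classify(weights, bias, evasive))  # benign
```

Each feature moved by only 0.2, yet the classification flipped. Defenders use the same construction to generate test cases when probing their own models for blind spots.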

The automation AI provides to attackers scales not just the volume of attacks but their customization. Where traditional attacks might target thousands of victims with identical messages, AI-powered attacks can generate thousands of unique variants, each tailored to its specific recipient. This diversity makes pattern-based detection more difficult and forces security systems to evaluate each attempt on its individual merits rather than matching against known attack signatures.

AI-Powered Threat Detection and Response

Security operations centers increasingly rely on AI to prioritize alerts and recommend responses. The volume of security events generated by modern networks far exceeds human analyst capacity to investigate. AI systems triage these events, separating genuine threats from false positives and ranking incidents by severity and confidence level. This allows human analysts to focus their expertise on the most critical and complex threats rather than drowning in alert noise.
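One simple way to picture triage is a ranking by severity weighted by confidence, with very low-confidence alerts filtered out as probable noise. The `Alert` fields, the scoring formula, and the `min_confidence` cutoff below are assumptions for illustration, not a description of any particular product.

```python
from dataclasses import dataclass

@dataclass
class Alert:
    name: str
    severity: int      # 1 (low) to 5 (critical)
    confidence: float  # 0.0 to 1.0, the model's confidence it is a real threat

def triage(alerts, min_confidence=0.2):
    """Drop likely false positives, then rank the rest so analysts
    see the highest severity-times-confidence alerts first."""
    kept = [a for a in alerts if a.confidence >= min_confidence]
    return sorted(kept, key=lambda a: a.severity * a.confidence, reverse=True)

queue = triage([
    Alert("port-scan", severity=2, confidence=0.8),
    Alert("ransomware-behavior", severity=5, confidence=0.9),
    Alert("noisy-rule", severity=3, confidence=0.1),
    Alert("odd-login", severity=4, confidence=0.5),
])

print([a.name for a in queue])
# ['ransomware-behavior', 'odd-login', 'port-scan']
```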

Behavioral analytics powered by AI detect insider threats and compromised credentials by identifying deviations from established user behavior patterns. When an employee account suddenly accesses systems or data outside their normal scope of responsibility, downloads unusually large data sets, or exhibits login patterns inconsistent with their historical behavior, AI systems flag these activities for investigation. These behavioral signals often detect compromised accounts before the attacker has completed their objectives.

Automated response capabilities enable AI systems to take defensive actions without waiting for human approval. When certain high-confidence threat indicators are detected, AI systems can quarantine affected systems, block suspicious network traffic, revoke compromised credentials, or trigger incident response workflows automatically. This rapid response contains threats before they can spread laterally through the network or exfiltrate significant data.
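A sketch of how such a response gate might look: known indicators map to a playbook action, and the action runs automatically only when confidence is high and the affected asset is not business-critical. The indicator names, the playbook, and the 0.95 cutoff are hypothetical values chosen for the example.

```python
# Assumed cutoff: only act without approval at or above this confidence.
AUTO_RESPONSE_MIN_CONFIDENCE = 0.95

# Hypothetical mapping from threat indicator to defensive action.
PLAYBOOK = {
    "ransomware_encryption": "quarantine_host",
    "c2_beacon": "block_traffic",
    "credential_stuffing": "revoke_credentials",
}

def decide_response(indicator, confidence, critical_asset):
    """Return 'auto:<action>', 'request_approval:<action>',
    or 'escalate_to_analyst' for indicators with no playbook entry."""
    action = PLAYBOOK.get(indicator)
    if action is None:
        return "escalate_to_analyst"
    if confidence < AUTO_RESPONSE_MIN_CONFIDENCE or critical_asset:
        return f"request_approval:{action}"
    return f"auto:{action}"

print(decide_response("ransomware_encryption", 0.99, critical_asset=False))
# auto:quarantine_host
print(decide_response("ransomware_encryption", 0.99, critical_asset=True))
# request_approval:quarantine_host
print(decide_response("c2_beacon", 0.70, critical_asset=False))
# request_approval:block_traffic
```

Routing critical assets to a human, even at high confidence, limits the chance that an automated action disrupts legitimate operations.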

The speed advantage AI provides in threat response is substantial. Human-driven incident response is typically measured in hours or days, while AI-driven automated response operates in seconds or minutes. For threats like ransomware, where encryption can lock an entire network in under an hour, the difference between a response measured in seconds and one measured in hours can determine whether the attack is contained or succeeds in causing significant damage.

Adversarial AI and the Arms Race

The field of adversarial machine learning studies how attackers manipulate AI systems and how defenders can make these systems more robust. Researchers have demonstrated that AI models can be fooled by carefully crafted inputs that are indistinguishable from legitimate data to human observers but cause the AI to make incorrect classifications. This vulnerability affects nearly all types of machine learning models, from image recognition to malware detection to network intrusion detection systems.

Defenders respond to adversarial attacks through adversarial training, where AI models are trained on both normal data and adversarially crafted examples. This exposure to attack attempts during training makes the models more robust to similar attacks in production. However, this defense is not absolute. Attackers can probe trained models to identify new adversarial examples that the training process did not cover, creating an ongoing cycle of attack and defense.

Model poisoning represents another category of adversarial threat. If attackers can inject malicious data into the datasets used to train AI security models, they can bias the models to miss specific attack types or misclassify malicious activity as benign. This supply chain attack on the AI training process is particularly concerning for models trained on data from external sources or crowdsourced threat intelligence.

The arms race dynamic between offensive and defensive AI capabilities accelerates continuously. As defenders develop more sophisticated detection systems, attackers develop more sophisticated evasion techniques. As attackers develop new exploit automation tools, defenders develop new automated defense mechanisms. This cycle shows no signs of slowing, and organizations must plan for continuous evolution of both threats and defenses rather than treating security as a static implementation.

Security Automation Benefits and Limitations

Automation powered by AI addresses the fundamental resource constraint in cybersecurity: the shortage of skilled security professionals. Organizations worldwide report difficulty hiring and retaining qualified security analysts, yet the volume of threats and the complexity of attack techniques continue to increase. AI automation multiplies the effectiveness of the security staff organizations do have by handling routine analysis tasks and escalating only the most critical or ambiguous situations to human experts.

The limitations of automation are equally important to understand. AI systems excel at pattern recognition and statistical analysis but lack the contextual understanding and creative thinking that human analysts provide. A sophisticated targeted attack that combines social engineering, insider knowledge, and novel techniques may not match known attack patterns closely enough for AI systems to recognize it as malicious. Human analysts who understand the business context and can reason about attacker motivation and objectives remain essential for detecting and responding to these advanced threats.

False positives present a persistent challenge for AI security systems. High sensitivity to potential threats generates alerts for activities that, in context, are perfectly legitimate. If security teams respond to every AI-generated alert, they quickly suffer alert fatigue and begin ignoring warnings. If they tune the system to reduce false positives, they risk missing genuine threats. Finding the right balance requires ongoing calibration based on the specific risk profile and tolerance of each organization.
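The tradeoff can be seen by counting errors at different alert thresholds over a small labeled sample. The scores and labels below are made up for illustration; in practice they would come from a validated set of past events.

```python
def errors_at(threshold, scored_events):
    """Given (score, is_malicious) pairs, return (false_positives,
    missed_threats) when alerting on every score >= threshold."""
    false_positives = sum(
        1 for s, bad in scored_events if s >= threshold and not bad
    )
    missed_threats = sum(
        1 for s, bad in scored_events if s < threshold and bad
    )
    return false_positives, missed_threats

# Hypothetical model scores paired with the true label.
events = [
    (0.95, True), (0.80, False), (0.70, True), (0.60, False),
    (0.40, True), (0.30, False), (0.10, False),
]

for threshold in (0.25, 0.50, 0.75, 0.90):
    print(threshold, errors_at(threshold, events))
# 0.25 (3, 0)
# 0.5 (2, 1)
# 0.75 (1, 2)
# 0.9 (0, 2)
```

Raising the threshold steadily removes false alarms and steadily adds missed threats, so the right setting depends on which kind of error the organization can better tolerate.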

AI automation works best when combined with human expertise in a collaborative model. The AI handles high-volume, repetitive tasks and initial threat assessment. Human analysts handle complex investigation, strategic decision-making, and response coordination. This division of labor lets each side work to its strengths while compensating for the other's limitations.

Security Risks Within AI Systems Themselves

AI systems deployed for security purposes become attractive targets themselves. If attackers can compromise the AI security stack, they can blind defenders while moving through the network undetected. This creates a new category of critical infrastructure that requires protection: the AI models, training data, and inference systems that power security operations.

Data poisoning attacks target the training data used to build AI security models. By injecting carefully crafted malicious samples into training datasets, attackers can bias models to miss specific attack signatures or misclassify certain activities. Public threat intelligence feeds, crowdsourced malware repositories, and user-reported security events all represent potential vectors for data poisoning if organizations do not properly validate and sanitize training data sources.
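One basic validation heuristic, sketched here as an assumption rather than a complete defense, is to admit a sample into the training set only when several independent sources agree on its label. The function `corroborated_samples`, the feed names, and the two-source minimum are illustrative.

```python
from collections import defaultdict

def corroborated_samples(reports, min_sources=2):
    """From (sample_hash, label, source) reports, keep only samples whose
    label is undisputed and backed by at least `min_sources` distinct sources."""
    votes = defaultdict(lambda: defaultdict(set))
    for sample_hash, label, source in reports:
        votes[sample_hash][label].add(source)

    accepted = {}
    for sample_hash, by_label in votes.items():
        if len(by_label) != 1:
            continue  # conflicting labels: hold back for manual review
        label, sources = next(iter(by_label.items()))
        if len(sources) >= min_sources:
            accepted[sample_hash] = label
    return accepted

reports = [
    ("aaa", "malicious", "feed_a"),
    ("aaa", "malicious", "feed_b"),
    ("bbb", "benign", "feed_a"),      # only one source
    ("ccc", "benign", "feed_a"),
    ("ccc", "malicious", "feed_c"),   # sources disagree
    ("ddd", "benign", "feed_b"),
    ("ddd", "benign", "feed_b"),      # same source repeated
]

print(corroborated_samples(reports))
# {'aaa': 'malicious'}
```

Counting distinct sources rather than raw reports matters: a single compromised feed cannot outvote the others by submitting the same sample many times.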

Model extraction represents another threat category. Attackers probe AI security systems with carefully chosen inputs and observe the outputs to reverse-engineer the decision-making logic of the model. With enough queries, attackers can build a replica of the security model and use it to develop attacks specifically designed to evade detection. Protecting against model extraction requires rate limiting queries, monitoring for unusual query patterns, and limiting the information returned in model outputs.
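Two of these mitigations, rate limiting and reducing output detail, can be sketched in a few lines. The sliding-window limiter and the coarse verdict function below are illustrative assumptions; the class name, limits, and threshold are not drawn from any specific product.

```python
from collections import defaultdict, deque

class QueryRateLimiter:
    """Allow at most `max_queries` per client within any
    `window_seconds`-long sliding window."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # client_id -> query timestamps

    def allow(self, client_id, now):
        timestamps = self._history[client_id]
        # Discard queries that have aged out of the window.
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        if len(timestamps) >= self.max_queries:
            return False
        timestamps.append(now)
        return True

def coarse_verdict(model_score, threshold=0.5):
    """Return only a label, not the raw score, so each query
    leaks less about the model's decision boundary."""
    return "suspicious" if model_score >= threshold else "clean"

limiter = QueryRateLimiter(max_queries=3, window_seconds=60)
print([limiter.allow("client-1", t) for t in (0, 1, 2, 3)])
# [True, True, True, False]
print(limiter.allow("client-1", 61))  # True: earliest queries have expired
print(coarse_verdict(0.87))           # suspicious
```

Passing `now` in explicitly keeps the example deterministic; a deployed version would read a monotonic clock instead.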

The black box nature of many AI systems creates operational security risks even when the systems are not under attack. Deep learning models often make decisions based on complex mathematical relationships that are difficult or impossible for humans to interpret. When an AI security system flags activity as suspicious, security teams may not fully understand why, making it harder to validate the finding, communicate the risk to stakeholders, or learn from the incident to improve future detection.

Striking the Right Balance

Organizations must approach AI in cybersecurity with eyes wide open to both its capabilities and its limitations. The technology provides genuine defensive advantages that are increasingly necessary to combat modern threats. Rejecting AI-powered security tools because of concerns about their risks leaves organizations vulnerable to attacks that leverage AI from the offensive side.

A balanced approach implements AI security tools with appropriate oversight and validation. Human analysts review AI-generated findings, particularly for high-stakes decisions like quarantining critical systems or reporting incidents to regulators. Security teams test AI systems regularly against adversarial examples and novel attack techniques to identify blind spots and vulnerabilities. Organizations maintain diverse defense strategies rather than relying entirely on AI-powered tools, ensuring that if one defensive layer fails, others remain effective.

Transparency in AI security systems builds trust and improves effectiveness. When security teams understand how their AI tools make decisions, they can better calibrate confidence in those decisions, identify potential failure modes, and explain security incidents to executives and boards. This transparency sometimes requires sacrificing some algorithmic sophistication for explainability, but the operational benefits of understandable security decisions often justify this tradeoff.

Continuous evaluation and improvement are essential for AI security systems. Threat landscapes evolve constantly, and AI models trained on historical attack data may not recognize emerging threats. Organizations should plan for regular model retraining, ongoing testing against new attack techniques, and periodic review of automation rules and thresholds to ensure they remain appropriate as the security environment changes.

The Future Landscape

The trajectory of AI in cybersecurity points toward increased autonomy and sophistication on both offensive and defensive sides. Defensive AI systems will likely gain more authority to take protective actions without human approval, as the speed advantage of automated response becomes more critical against increasingly rapid attacks. This increased autonomy will require robust safeguards to prevent AI systems from causing unintended disruption to legitimate business operations.

Offensive AI capabilities will continue to lower the barrier to entry for sophisticated attacks. Tools that currently require significant expertise to develop and deploy will become more accessible and automated. This democratization of attack capabilities means that less sophisticated threat actors will be able to execute attacks that previously only well-resourced groups could accomplish. Defenders must prepare for a world where advanced persistent threat techniques become commoditized.

Regulatory frameworks around AI in cybersecurity are beginning to emerge. Governments are developing standards for how AI security systems should be tested, what explainability requirements they must meet, and what oversight humans must maintain over AI security decisions. These regulations will shape how organizations can deploy AI security tools and what liability they face when those tools fail or cause unintended harm.

The integration of AI across all aspects of cybersecurity operations will deepen. Current AI implementations focus primarily on threat detection and initial response. Future systems will span the entire security lifecycle, from risk assessment and security architecture design through incident response, forensic investigation, and lessons learned analysis. This comprehensive integration will make AI an even more fundamental component of security programs, increasing both the benefits and the risks associated with AI security tools.

Practical Implementation Guidance

Organizations implementing AI security tools should start with clear objectives and success criteria. Define specifically what security problems the AI tools should address and how success will be measured. Vague goals like "improve security" provide insufficient guidance for tool selection, configuration, and evaluation. Specific goals like "reduce time to detect credential compromise from 48 hours to 4 hours" or "decrease false positive rate in phishing detection by 30%" create accountability and allow meaningful assessment of whether the AI implementation delivers value.
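A goal such as the four-hour detection target only creates accountability if it is actually computed. The sketch below measures mean time to detect from pairs of timestamps; the incident data is invented, and how an organization establishes the true time of compromise is assumed to be handled elsewhere.

```python
from datetime import datetime

def mean_hours_to_detect(incidents):
    """Average gap, in hours, between compromise and detection
    across (compromised_at, detected_at) pairs."""
    gaps = [
        (detected - compromised).total_seconds() / 3600
        for compromised, detected in incidents
    ]
    return sum(gaps) / len(gaps)

def meets_target(incidents, target_hours):
    return mean_hours_to_detect(incidents) <= target_hours

# Hypothetical incidents from a pilot period.
incidents = [
    (datetime(2026, 1, 5, 8, 0), datetime(2026, 1, 5, 11, 0)),   # 3 hours
    (datetime(2026, 1, 10, 22, 0), datetime(2026, 1, 11, 3, 0)), # 5 hours
]

print(mean_hours_to_detect(incidents))           # 4.0
print(meets_target(incidents, target_hours=4))   # True
```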

Pilot programs allow organizations to test AI security tools in controlled environments before broad deployment. Run new AI systems in parallel with existing security measures initially, comparing their findings and identifying where they agree, where they disagree, and which produces more accurate results. This parallel operation builds confidence in the AI system's reliability and helps security teams understand its strengths and weaknesses before making it a primary security control.
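The parallel-run comparison can be summarized with a few numbers: how often the two systems agree, which events they disagree on, and how each fares against analyst-confirmed outcomes. The event IDs and verdicts below are hypothetical, and the sketch assumes every event has a verdict from both systems plus a confirmed label.

```python
def compare_detectors(existing, candidate, truth):
    """Each argument maps event_id -> bool (True means 'flagged as threat').
    `truth` holds the analyst-confirmed outcome for every event."""
    event_ids = sorted(truth)
    total = len(event_ids)
    disagreements = [e for e in event_ids if existing[e] != candidate[e]]

    def accuracy(verdicts):
        return sum(verdicts[e] == truth[e] for e in event_ids) / total

    return {
        "agreement_rate": (total - len(disagreements)) / total,
        "disagreements": disagreements,
        "existing_accuracy": accuracy(existing),
        "candidate_accuracy": accuracy(candidate),
    }

existing_tool = {"e1": True, "e2": False, "e3": False, "e4": True, "e5": False}
ai_pilot      = {"e1": True, "e2": True,  "e3": False, "e4": False, "e5": False}
confirmed     = {"e1": True, "e2": True,  "e3": False, "e4": False, "e5": False}

report = compare_detectors(existing_tool, ai_pilot, confirmed)
print(report["disagreements"])  # ['e2', 'e4']
```

The disagreement list is usually the most useful output, since those are the events worth reviewing by hand to learn where each system is stronger.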

Integration with existing security infrastructure is critical for AI security tools to deliver value. AI systems that operate in isolation, generating their own alerts without coordination with other security tools, create additional work for security teams rather than reducing it. Ensure AI security platforms can feed alerts into your security information and event management (SIEM) system, integrate with your incident response workflows, and coordinate with your other security controls to provide unified threat detection and response.

Training security staff on AI tools is as important as implementing the tools themselves. Security analysts need to understand how the AI systems work, what their limitations are, and how to interpret and validate their findings. This training should cover both the technical operation of the tools and the broader conceptual understanding of AI capabilities and limitations. Security teams that understand their AI tools use them more effectively and maintain appropriate skepticism about AI-generated findings.

Related Reading: AI Across Security and Development

AI's impact extends far beyond cybersecurity into how we live and work daily. For a comprehensive look at how AI assistants are changing household and personal technology while raising similar privacy and security questions, see our guide on AI Assistants in Everyday Life: Convenience, Privacy, and Smart Living, which examines the privacy tradeoffs millions of users make when adopting voice-controlled smart home technology.

Small businesses face unique challenges implementing both AI tools and cybersecurity defenses with limited resources. Our comprehensive Cybersecurity for Small Businesses in 2026 guide provides practical strategies for protecting organizations without enterprise security budgets, including how to evaluate and implement AI-powered security tools cost-effectively.

AI code generation presents a particularly relevant case study for AI security risks. As explored in our article on AI Code Generation in 2026, AI-written code can introduce security vulnerabilities if developers accept suggestions without thorough review. Understanding how AI tools are reshaping software development helps contextualize the broader security implications of AI adoption across technology domains.

Frequently Asked Questions

Is AI-powered cybersecurity more effective than traditional security tools?

AI-powered security tools excel at detecting patterns and anomalies that traditional signature-based systems miss, and they do so at speeds and scales human analysts cannot match. However, they are not inherently superior for all security tasks. AI tools work best when combined with traditional security measures in a layered defense strategy. Traditional tools provide reliable protection against known threats, while AI tools add capability for detecting novel attacks and processing large volumes of security data. The most effective security programs use both.

Can AI security systems be hacked or fooled?

Yes. AI security systems are vulnerable to adversarial attacks designed to cause misclassification, data poisoning that biases training data, and model extraction that reveals decision-making logic. These vulnerabilities are well-documented in security research. However, AI vendors and security teams actively develop countermeasures including adversarial training, input validation, and query monitoring. Organizations should assume AI security systems have vulnerabilities and implement appropriate oversight and validation of AI-generated security findings.

Do I need AI security tools if I have a small organization?

Small organizations can benefit from AI security tools, particularly cloud-based services that provide enterprise-grade protection at accessible price points. However, AI tools should not be the first security investment. Implement fundamental security measures first: multi-factor authentication, employee training, secure backups, and patch management. Once these basics are in place, AI-powered tools can add valuable detection and response capabilities without requiring dedicated security staff.

How do attackers use AI in cyberattacks?

Attackers use AI for several purposes: generating convincing phishing messages at scale, automating vulnerability discovery, creating polymorphic malware that changes to evade detection, and developing adversarial examples that fool AI security systems. Large language models make sophisticated social engineering attacks accessible to less skilled attackers. Machine learning accelerates the reconnaissance and exploitation phases of attacks. These capabilities are already used in real-world attacks; they are not merely theoretical.

What is adversarial machine learning in cybersecurity?

Adversarial machine learning studies how attackers manipulate AI systems and how to make AI more robust against manipulation. Adversarial examples are inputs crafted to cause AI models to make incorrect classifications. In cybersecurity, this might mean malware modified to evade AI-powered detection or network traffic disguised to appear benign to AI monitoring systems. Defenders counter with adversarial training and input validation, but this remains an active area of research with no complete solution.

Should humans always review AI security decisions before taking action?

It depends on the decision's impact and confidence level. Low-risk, high-confidence actions like blocking a known malicious IP address can be automated safely. High-impact decisions like quarantining critical business systems should require human approval. Organizations should define clear escalation criteria: what confidence thresholds and impact levels trigger automated response versus human review. This balance evolves as AI systems prove themselves reliable and as threats require faster response than human approval cycles allow.

How often should AI security models be retrained or updated?

AI security models should be evaluated for retraining at least quarterly, with updates whenever significant changes occur in the threat landscape, business operations, or IT infrastructure. Major new attack campaigns, zero-day vulnerabilities, or organizational changes like mergers may warrant immediate retraining. Continuous learning systems update in real time, but even these require periodic comprehensive retraining on validated datasets. The retraining schedule should balance model freshness against the risk of incorporating poisoned data.

What regulations govern AI use in cybersecurity?

Regulatory frameworks for AI in cybersecurity are emerging but not yet comprehensive in most jurisdictions. The EU AI Act addresses high-risk AI systems, including some security applications. The NIST AI Risk Management Framework provides voluntary guidance for US organizations. Data protection and industry rules such as GDPR, HIPAA, and PCI DSS may impose requirements on how AI processes sensitive data. Organizations should monitor regulatory developments in their jurisdictions and industries, as this area is evolving rapidly.

This article is part of our Artificial Intelligence series exploring how AI technologies reshape security, development, and daily life. Related reading: Machine Learning Security Best Practices, AI Transparency and Explainability, and The Ethics of Automated Security Decisions.